Nationscape™ is one of the largest public opinion projects ever conducted, gathering insights from more than 6,000 interviews with Americans every week and about half a million over the course of the project. However, even with large sample sizes, public opinion surveys always raise the question, “How much do the results reflect reality?”

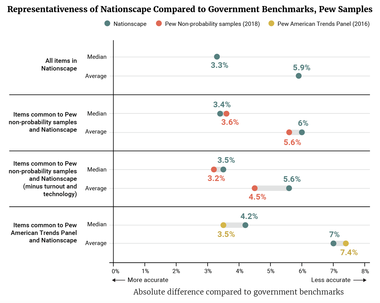

To evaluate the quality of the Nationscape methodology, Lynn Vavreck, Chris Tausanovitch, and their team of researchers at UCLA conducted a representative assessment. In essence, they replicated the Pew Research Center’s work, which compared estimates from large, reliable government surveys with estimates from online non-probability surveys.(1)(2)

(1) Courtney Kennedy, Andrew Mercer, Scott Keeter, Nick Hatley, Kyley McGeeney, and Alejandra Gimenez, “Evaluating Online Nonprobability Surveys,” Methods, Pew Research Center, May 2, 2016, Accessed December 6, 2019. Available at: https://www.pewresearch.org/methods/2016/05/02/evaluating-online-nonprobability-surveys/.

(2) Andrew Mercer, Arnold Lau, and Courtney Kennedy, “For Weighting Online Opt-In Samples, What Matters Most?” Methods, Pew Research Center, January 26, 2018, Accessed December 6, 2019. Available at: https://www.pewresearch.org/methods/2018/01/26/for-weighting-online-opt-in-samples-what-matters-most/.

So, for example, we might compare the share of people who say they have a driver’s license in a government survey to the share who say the same in Nationscape. Doing this across a wide variety of questions that cover a spectrum of topics allows researchers to assess the overall quality of a survey.

The result? The median difference between Nationscape’s estimates and the government benchmarks was 3.3 percentage points. This is similar to the 3.6 percentage point difference between Pew’s online non-probability samples and those same benchmarks.
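As a rough illustration of the arithmetic behind a figure like this, the sketch below computes the median absolute difference between a survey's estimates and a set of benchmarks. The question names and percentages are hypothetical placeholders, not actual Nationscape or government figures, and the use of absolute differences is an assumption about how the gaps are summarized.

```python
from statistics import median

# Hypothetical benchmark estimates (percent of adults), standing in for
# figures from large government surveys. NOT real data.
benchmark_estimates = {
    "has_drivers_license": 86.0,
    "smokes_daily": 15.0,
    "owns_home": 60.0,
}

# Hypothetical estimates for the same questions from an online survey.
# NOT real Nationscape data.
survey_estimates = {
    "has_drivers_license": 83.0,
    "smokes_daily": 19.0,
    "owns_home": 58.0,
}


def median_abs_diff(survey, benchmark):
    """Median absolute gap, in percentage points, over shared questions."""
    shared = survey.keys() & benchmark.keys()
    diffs = [abs(survey[q] - benchmark[q]) for q in shared]
    return median(diffs)


gap = median_abs_diff(survey_estimates, benchmark_estimates)
print(f"Median absolute difference: {gap:.1f} percentage points")
```

With these placeholder numbers the per-question gaps are 3, 4, and 2 points, so the median is 3 points. A smaller median means the survey tracks the benchmarks more closely across questions.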

To learn more, read about Nationscape’s methodology and the representative assessment here.

Robert Griffin is the research director of the Democracy Fund Voter Study Group.

Lynn Vavreck is the Marvin Hoffenberg Professor of American Politics and Public Policy at UCLA. Chris Tausanovitch is an associate professor of political science and vice chair of the UCLA Political Science department. Derek Holliday, Tyler Reny, Alex Rossell Hayes, and Aaron Rudkin are UCLA researchers. Views expressed here are their own and do not necessarily reflect those of the collective Voter Study Group.
